Current Portfolio

The right questions, asked of the right audience using the right methodologies, yield insights that:

drive organizational strategy (mixed and multi-methods)

Key questions: Why was a core vertical slowing in growth? What would it take to unlock it?

Audience: Technical buyers hiring technical talent, and the technical talent themselves

Outcomes: Beyond the host product team (Categories/Search and Match), which implemented both UX and search algorithm changes, teams acting on the results included sales and marketing (language and landing pages), analytics (granular volume vs. value reporting), and service teams (expanded or altered offerings).

Discussion: This partnership discovery study drew on several data sources: behavioral analytics, discovery and semi-structured qualitative interviews, comparative surveys, content analyses, and focus groups. Interest in the project snowballed, which allowed us to accelerate our efforts, broaden our reach, and increase our impact. Learnings were shared in a two-day workshop and in a final summary readout that helped prioritize the host product team’s roadmap and spurred cross-functional partner teams to action.

In the learning agenda shown here, core lines of inquiry were research-driven (green) while lines of inquiry that required additional expertise or secondary research included research-accountable (white) and research-consulted (grey) activities.

Upwork, Categories/Search and Match team with key cross-functional partners

Outline of learning agenda.

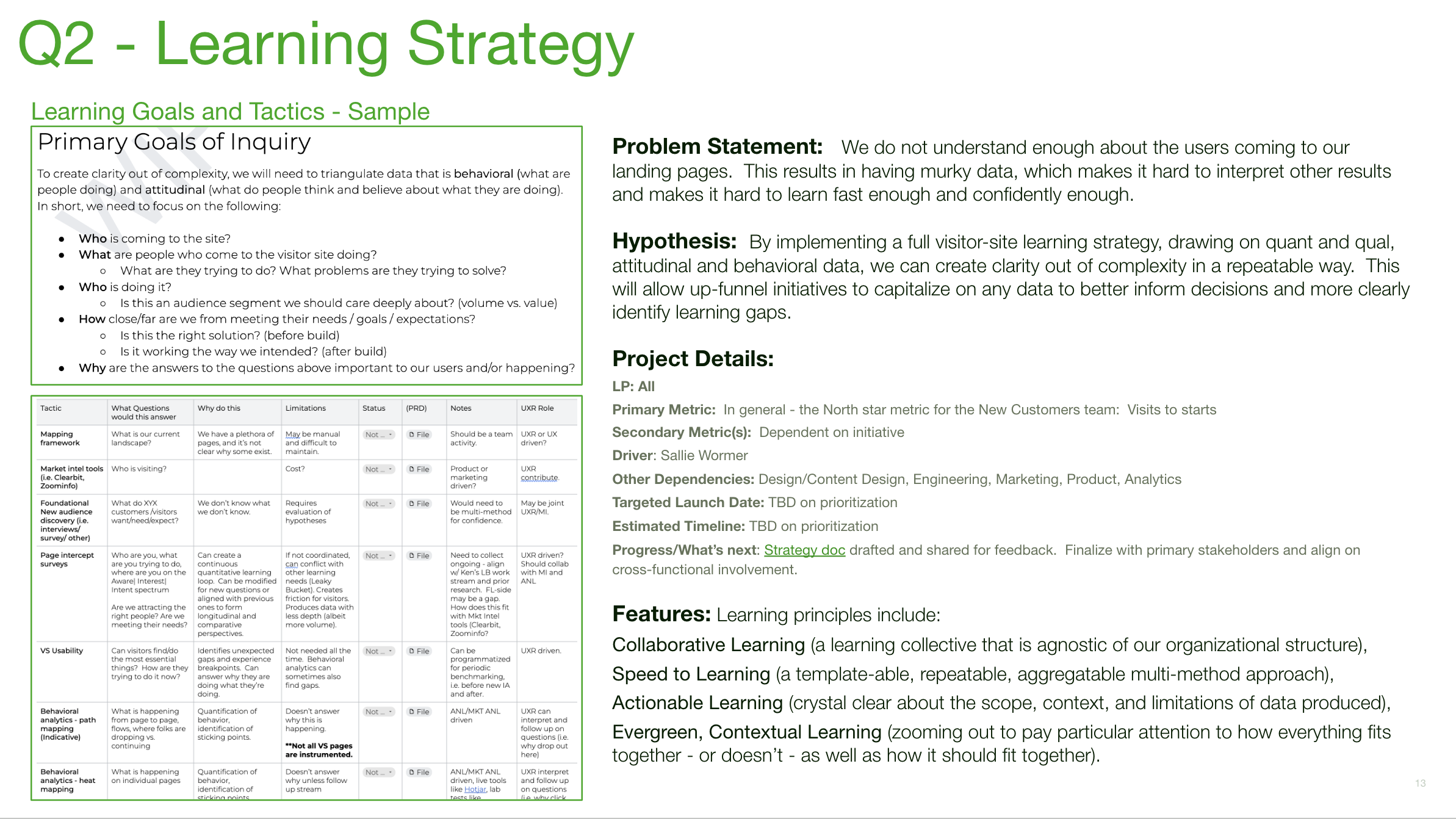

“fly the plane while building” (opportunistic learning strategy)

Team questions: How do prospects perceive the new [page/IA/messaging/___]?

Strategy questions: Who is coming to the visitor site? What are they trying to find/do? Why?

Research strategy goal: How do we create clarity from murky data without slowing the team?

Audience: Visitors (excluding registered users) and target prospects

Outcomes: Results were used immediately in landing page and IA explorations, leading to winning tests and improved conversion of ICPs; in the mid term, to remove old experiments that no longer served Upwork customer needs; and in the longer term, for performance evaluation over time.

Discussion: Upwork’s audience is broad and diverse, with talent ranging from beginner to expert across more than 180 categories, and clients ranging from those who have never hired anyone to those who have hired many, at companies from startups to the Fortune 500. In addition, the mix of the two primary audiences is heavily skewed to one side.

We helped product managers, designers, and analysts focus on better-formed questions and steered them away from adopting learning tactics without a question behind them (e.g., ‘we need heat mapping’). The result was a scaffolded, just-in-time learning strategy that unpacked who, what, why, and how well through a series of smaller studies. Some of these were designed to be left in place (intercept surveys), some were benchmarked by trusted vendors (usability by MeasuringU), and some were built to be reused by non-researchers (unmoderated concept evaluation and tree testing).

Upwork, Growth and marketing teams

Summary (slide) of visitor site strategy, prior to implementation.

identify motivators (behavioral segmentation with attitudinal survey)

Questions: What drives talent to persist and be successful vs. churn? Which defining attributes could be predictive for success or failure? What does the current talent pool look like?

Audience: Six key behavioral groups of talent, from newly registered through decreasing activity

Outcomes: Results were used immediately in new onboarding explorations, mid term for more nuanced and accurate data analysis, and long term in an overall shift in talent strategy.

Discussion: With a large talent base of diverse expertise, success rates, goals, and needs, we lacked a quantitative understanding of what actually influenced behaviors and success. For this attitudinal survey exploring beliefs, context, and goals, I relied on Data Science colleagues to identify key behavioral segments for recruiting quotas. The between-group analysis correlated behaviors with survey responses, while weighted results demonstrated how the behaviors of larger groups masked smaller but more important ones. We identified attitudinal overlaps that resulted in four primary protopersonas, sized against the current customer base, and two significant but contextual influences: goals and geolocation.

A secondary deliverable was a summary infographic of key highlights, shown here.

Upwork, Talent team

Infographic from attitudinal segmentation study.

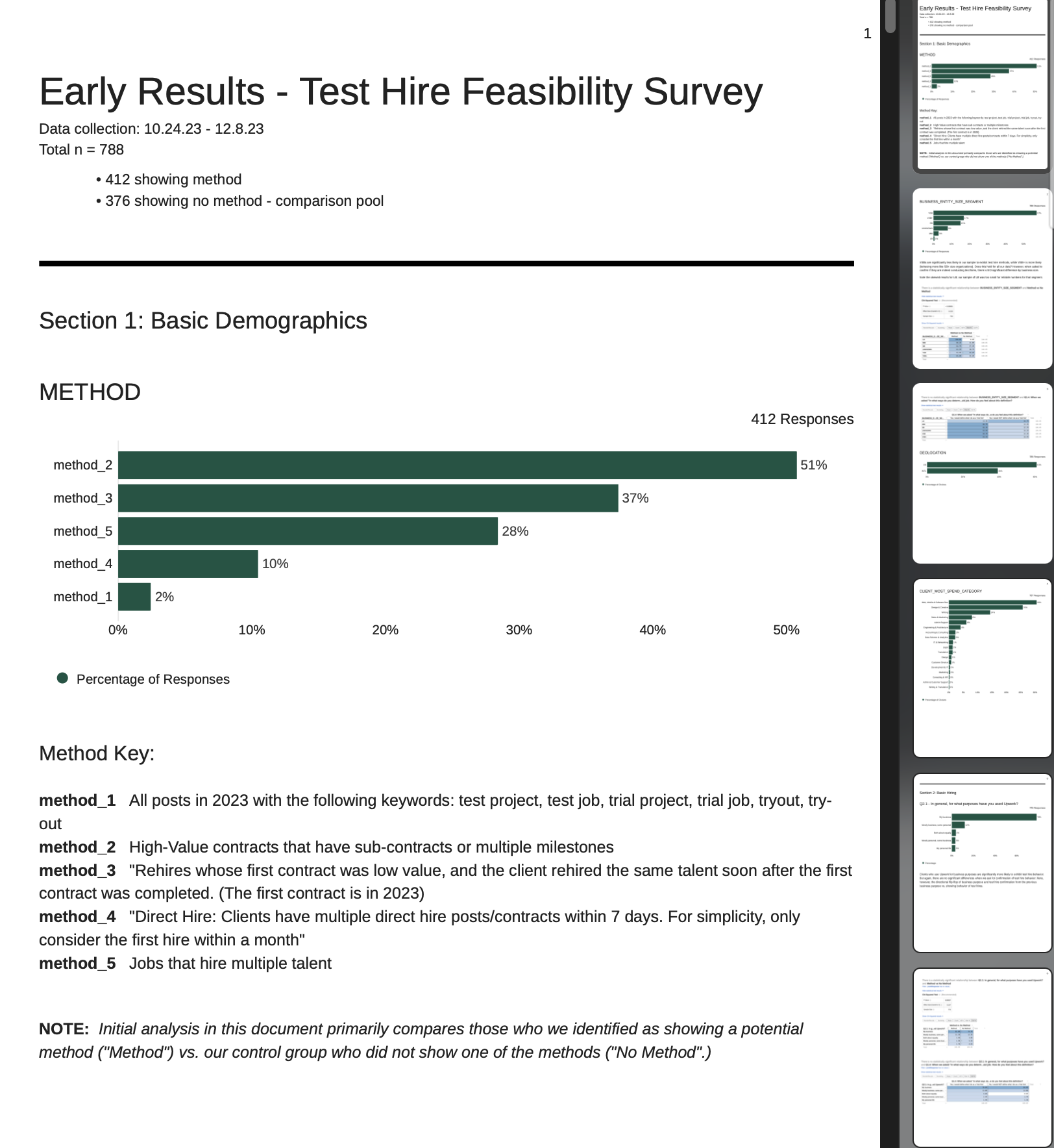

Sample of ‘live’ report of survey data, built in Qualtrics, for faster decision making

inform go-no-go decisions prior to building (survey)

Question: Is there a ‘there’ there? If so, how much?

Audience: Existing buyers exhibiting an observed behavior vs. those who did not

Outcomes: The results yielded no pain points or challenges that differed significantly between the control and test groups. Therefore, there was little value in building or monetizing an additional solution, although there was some educational opportunity for less experienced hiring managers. The product team opted not to pursue the initiative, and offered the idea to the CX team to consider in onboarding education.

Discussion: Prior qualitative studies had indicated that some clients chose to hire in more cautious ways, similar to contract-to-hire but for freelancing positions. Leadership believed this could be a valuable solution, having seen that these hires eventually yielded larger-than-average revenue, but gave permission to size and more thoroughly investigate the opportunity first. By comparing the test and control groups’ attitudes, rationale, and pain points, the team was able to head off the expense of building a solution without a problem.

As the roadmapping decision was a hot topic and the analysis, while deep, was not overly qualitative, I built a ‘live’ report in Qualtrics during data collection (shown here). The product owner and I monitored this to ensure we had a good cross-comparison, and we effectively had our results and decision immediately on survey closure. I also wrote a technical report as the final deliverable, which was shared up and out.

Upwork, Talent team

create lasting frameworks (digital ethnography and collage)

Questions: What are talent trying to do on their homepage? Why? What is getting in their way?

Audience: Range of talent from new to experienced

Outcomes: The learnings from these studies informed a revamp of the logged-in landing page, an eventual IA redesign proposal, and a framework for more contextually accurate design exploration and evaluations.

Discussion: Behaviorally, we observed that experienced, successful talent were skipping right over the landing page, while new talent were not completing tasks that moved them along their journey. By observing talent use the existing solution and discuss what they needed to do, we learned their choices were informed by the ‘mode’ they were in as well as their freelancing goals. Layering in learnings from the talent segmentation study (above) also helped create a fundamental framework for understanding interactions with both product and services.

The high-level jobs-to-be-done overview (shown here) was used as a wayfinding and anchoring mechanism in the final readout, as well as a framework for subsequent studies and end-to-end journey mapping.

Upwork, Talent and Match teams

Talent jobs-to-be-done framework in readout.

restructure a service solution (mixed methods)

Question (original): When is a human essential in our support solution?

Question (resulting): Why have we shipped our org chart with multiple support solutions?

Audience: New and existing customers, both sides of the marketplace

Discussion: The original goal of this study was to identify the drivers that require human customer support. While the study met that goal, we also uncovered a larger organizational problem: multiple unrelated teams owned live support systems, ranging from communities to queryable databases to human agents. Not only did some of these systems give advice that conflicted with other solutions, but there were also few structural pathways between where a customer might start a request for help and where they could actually get an answer.

In addition to quantifying the drivers for needing human vs. self-service support via regression modeling (with fit assessed by McFadden’s pseudo-R-squared), existing and overlapping services were mapped for reference (shown here).
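For reference, McFadden’s pseudo-R-squared is a goodness-of-fit measure for models fit by maximum likelihood, such as logistic regression. Its standard definition is given below; the symbols are generic notation rather than the study’s own, and the specific model used in the study is not stated here.

```latex
% \hat{L}_{\text{full}}: maximized likelihood of the fitted model (with predictors)
% \hat{L}_{\text{null}}: maximized likelihood of the intercept-only model
\[
  R^2_{\text{McF}} = 1 - \frac{\ln \hat{L}_{\text{full}}}{\ln \hat{L}_{\text{null}}}
\]
```

A value near 0 means the predictors add little over the intercept-only model; larger values indicate a better fit.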

Upwork, Trust and Safety Customer Support

Map of overlapping support solutions and access points.

uncover adoption risk (end-to-end scenario/task testing)

Question: How well do our ideas map to user needs and expectations?

Audience: Potential customers, growers and co-op partners

Outcomes: Learnings enabled the team to address multiple critical ‘gotchas’ before development, and to begin to question the Market+ product’s viability.

Discussion: Product had planned on doing only internal “user acceptance testing” for a new multi-sided product launch. I’m grateful the design director supported my push to get the clickable prototype in front of both sets of primary users before development was completed. The timeline was incredibly tight, the time of year made recruiting tricky, and the complexity of the space made it challenging to create true-to-life tasks.

For the first round of E2E testing, I created a rubric to make task evaluation clear and consistent, and employed UMUX-Lite questions to prompt participants to explain their reactions. The team was surprised that the behavioral results (task success) were quite different from participants’ attitudinal responses. For the second set of E2E users, we had multiple challenges with the prototype and pivoted to a concept test with cluster analysis to meet the timeline while still getting useful insights.
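As a rough illustration of how UMUX-Lite responses are commonly scored, here is a minimal sketch. It assumes the standard two-item, 7-point agreement format rescaled to 0-100; the function name and the sample responses are hypothetical, and the study’s actual instrument and scoring are not described here.

```python
from statistics import mean


def umux_lite_score(capabilities: int, ease_of_use: int) -> float:
    """Rescale two 7-point agreement ratings to a 0-100 score."""
    for rating in (capabilities, ease_of_use):
        if not 1 <= rating <= 7:
            raise ValueError("ratings must be between 1 and 7")
    # Each item contributes 0-6 points after subtracting 1; 12 is the maximum.
    return (capabilities + ease_of_use - 2) / 12 * 100


# Hypothetical responses, for illustration only: (capabilities, ease_of_use)
responses = [(6, 5), (7, 7), (3, 4)]
scores = [umux_lite_score(c, e) for c, e in responses]
average_score = mean(scores)
```

Comparing a per-participant score like this against task success from the rubric is one way to surface the kind of behavioral vs. attitudinal gap described above.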

IndigoAg, Market+ team

E2E analysis with task success rubric. User group 1.

measure performance over time (UX metrics)

One of the trickiest questions is what influence UX has in supporting an organization’s goals. I started the ball rolling with UX metrics at Upwork with two simple intercepts on the SERPs: one to rate the perceived relevance of results and one to measure the usability of the interface (UMUX-Lite). The goal was to help the team pinpoint where challenges in search existed, focus their efforts, and periodically remeasure if we saw weirdness in our OKRs again. As these were so successful for the search DS and UX teams, the organization expanded them into other parts of the experience. I returned to this work years later, when we realized that perhaps we had been overzealous with the idea and needed to map the data teams were collecting against the overall journey.

Shown here are end-to-end journey maps, structured on jobs-to-be-done for our two primary customers (aggregate-level), with links to various surveys, Looker dashboards, and summary funnel analyses or studies at key steps and drop-offs.

Upwork, overall Product and Design

Journey maps with links to existing UX metrics and other data.