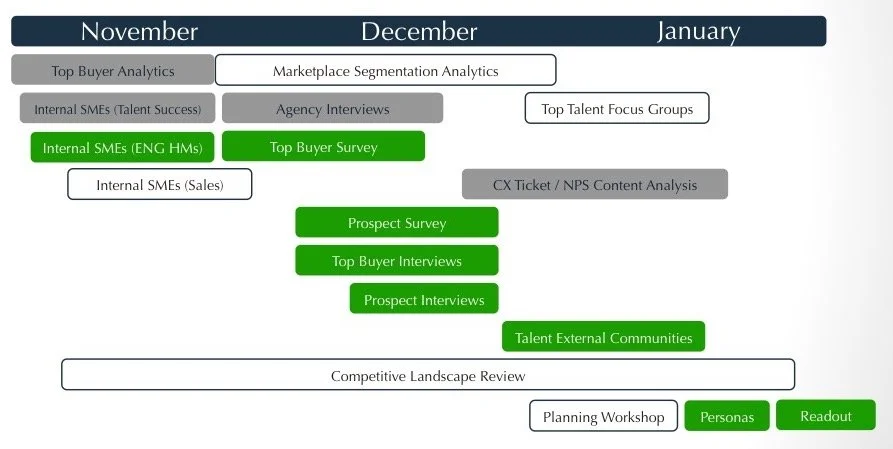

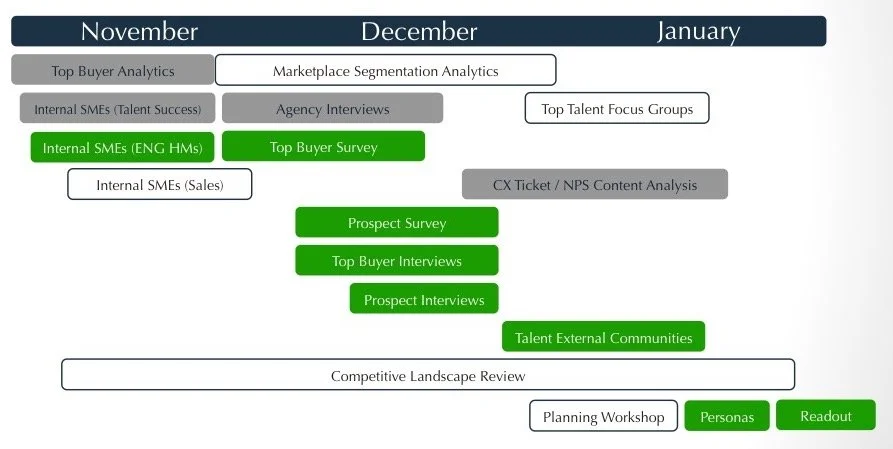

Timeline of multi-method research activities

Green - Research-led activities, such as the technical SME interviews that informed the surveys and additional customer interviews, were planned and conducted by me.

White - Research-consulted activities, such as the focus group or analytics questions to answer, were informed or designed by research but led by others.

Grey - Research-requested independent activities, such as the C360 analysis, were prompted by research but carried out without research oversight.

Because we could marshal the troops, we completed the data collection in an abbreviated time frame.

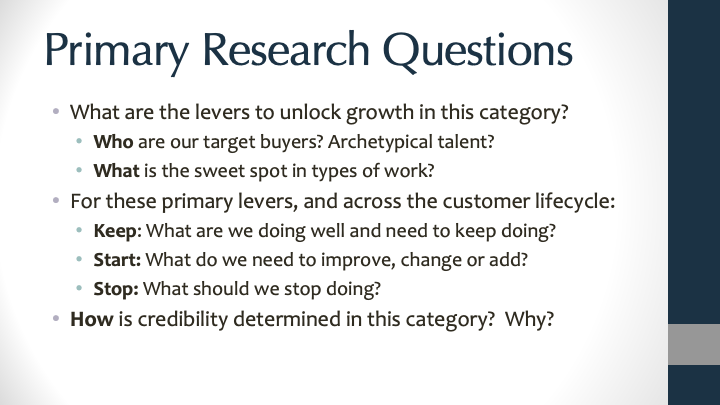

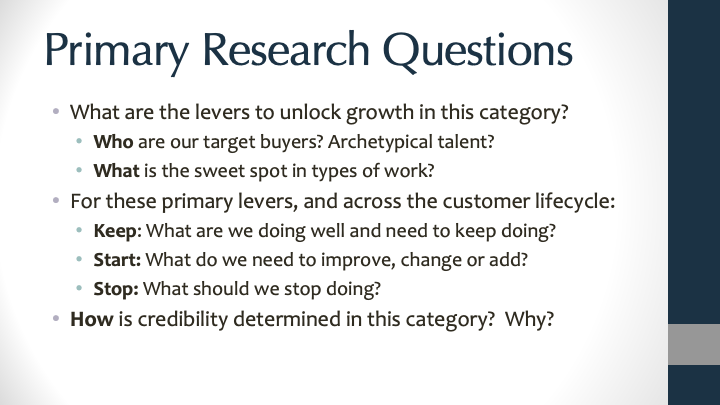

Primary research agenda questions

Each of these questions required different (or multiple) methods. The overall agenda was designed so that findings from individual studies informed the design of subsequent inquiries.

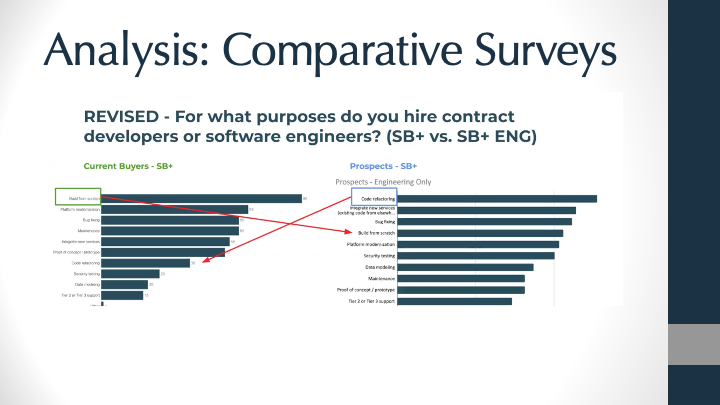

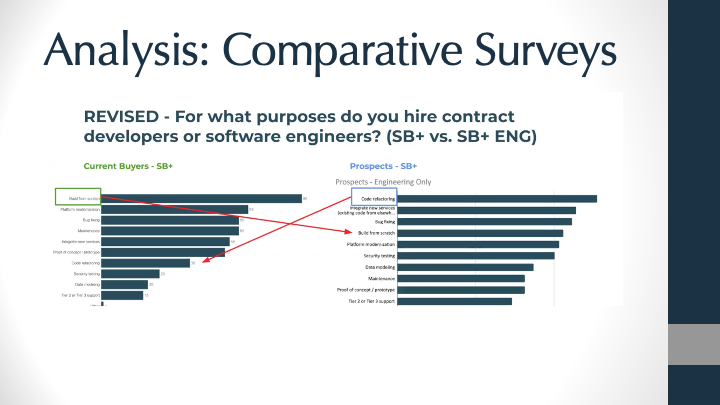

Sample from analysis deck of prospect vs buyer surveys

While our analysts are very happy working with the raw numbers, the designers and PMs needed something more visual to anchor on. We threw together a side-by-side reference deck and annotated statistically significant (and interesting) differences. We also split results by business size and by the respondent's role or department within their organization to better uncover potential ICP attributes for our marketing team.
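For readers curious how a difference between two survey groups can be flagged as statistically significant, here is a minimal sketch. It assumes a two-proportion z-test and a 0.05 threshold; the study does not say which test or threshold was actually used, and the function name and counts are hypothetical.

```python
import math

def two_proportion_z_test(hits_a, n_a, hits_b, n_b):
    """Return (z, two-sided p-value) for the difference between two proportions.

    Assumption: a pooled two-proportion z-test is an acceptable stand-in for
    whatever test the analysts actually ran.
    """
    p_a = hits_a / n_a
    p_b = hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Everyone (or no one) chose the option in both groups: no difference to test.
        return 0.0, 1.0
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical counts: respondents who selected one answer option.
z, p = two_proportion_z_test(hits_a=60, n_a=100, hits_b=40, n_b=100)
flag = "annotate" if p < 0.05 else "skip"
print(f"z={z:.2f}, p={p:.4f}, {flag}")
```

The same check could be repeated within each business-size or role segment, keeping in mind that smaller segments make small differences harder to detect.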

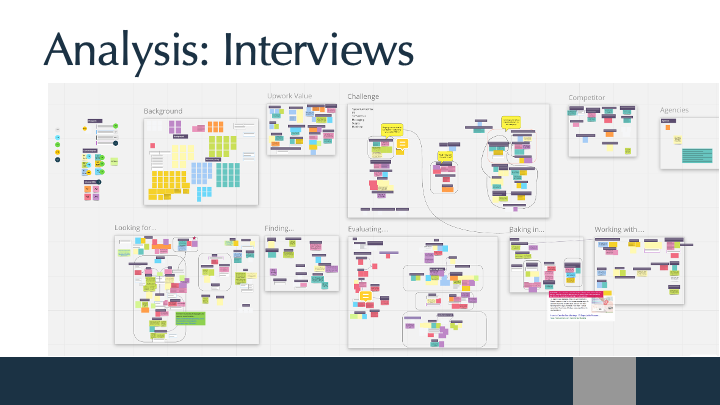

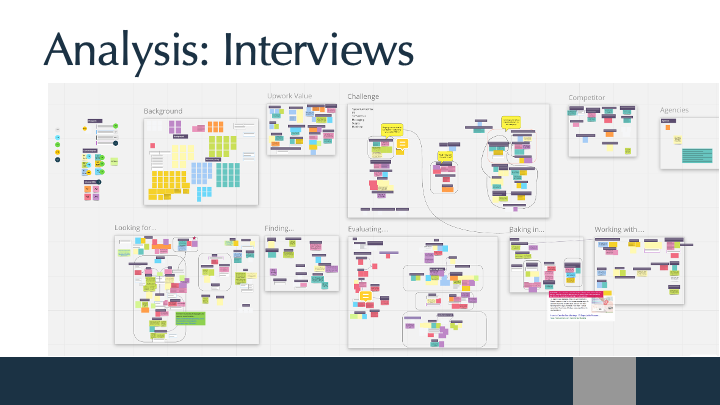

Thematic clustering of SME, prospect and buyer interviews

The analysis is structured by study question, then clustered by emerging themes. Color coding exact quotes by participant and then grouping them by theme creates a quick visual heat map, without falling into the loudest-voice trap (where all of the quotes in a theme come from the same person).
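The loudest-voice check can also be expressed as a simple tally. The sketch below is illustrative only and assumes the coded quotes are available as (participant, theme) pairs; the participant IDs and theme names are hypothetical, and the actual analysis was done visually on the board.

```python
from collections import defaultdict

def summarize_themes(coded_quotes):
    """Count quotes and distinct participants per theme.

    coded_quotes: iterable of (participant_id, theme) pairs, one per quote.
    """
    quotes = defaultdict(int)
    voices = defaultdict(set)
    for participant, theme in coded_quotes:
        quotes[theme] += 1
        voices[theme].add(participant)
    return {
        theme: {"quotes": quotes[theme], "participants": len(voices[theme])}
        for theme in quotes
    }

# Hypothetical coded quotes.
sample = [
    ("P1", "pricing"), ("P1", "pricing"), ("P1", "pricing"),
    ("P2", "onboarding"), ("P3", "onboarding"),
]
for theme, counts in summarize_themes(sample).items():
    print(theme, counts)
```

A theme with many quotes but only one participant is the pattern the color coding is meant to expose: it looks heavy by volume but reflects a single voice.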

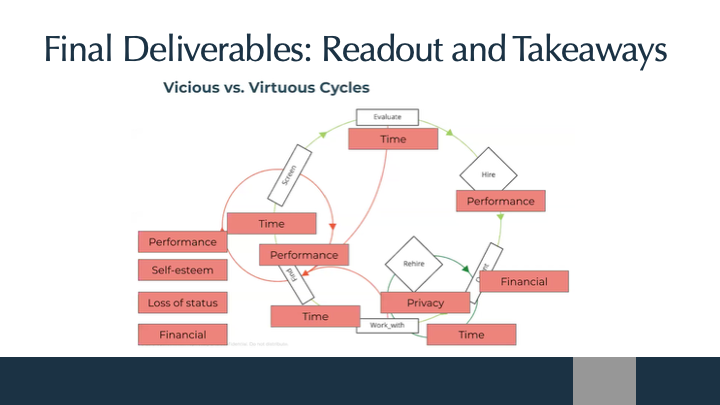

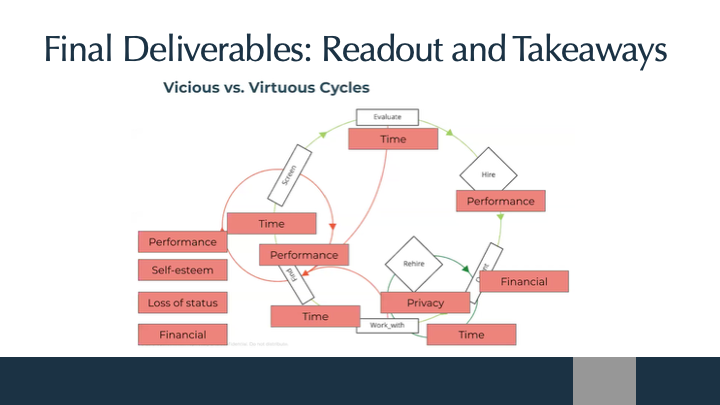

Sample from final readout - vicious vs virtuous cycles

This was animated in the deck. The virtuous cycle appeared first as an easy green loop, then the drop-off points came in at an accelerating pace, each with its risk to the buyer clearly labeled. It was important for the team not to generalize “risk” here, as each barrier might need a different solution, or solving one easy problem might not change the overall equation. The team workshop then dissected these barriers and prioritized what would go on the roadmap.

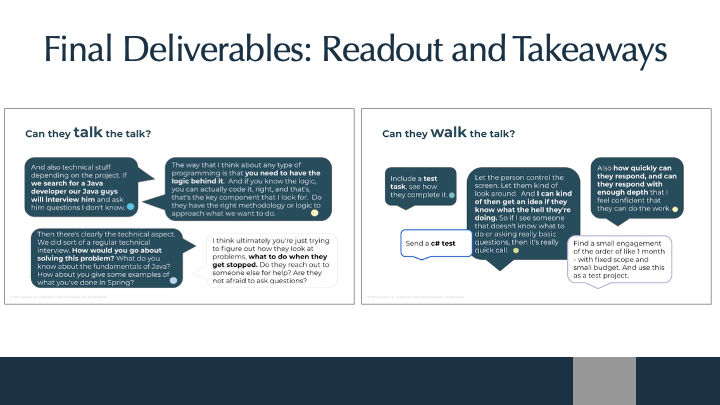

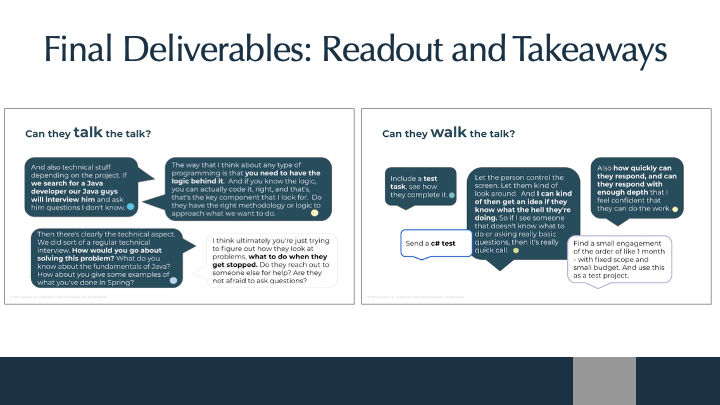

Sample from final readout - how quotes were used

The readout needed to be precise and explicit for its audience without getting too heavy. Consider this analogy: “Java is to JavaScript like ham is to hamster.” Why did this matter? Over-summarization and a lack of technical clarity in prior research had impeded the team. Combining plain language with explicit quotes from the technical participants gave designers and engineers a shared understanding of this vertical, without losing sight of the big picture for the marketing team.

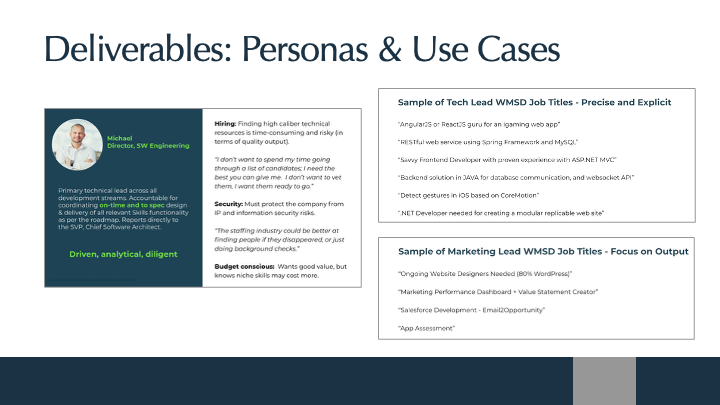

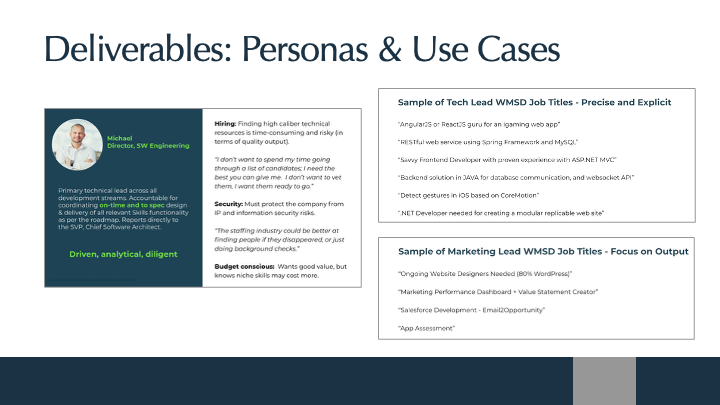

Sample deliverable - personas and use cases

Specifically for the marketing team, primary and secondary personas were created with existing successful use cases. Prior marketing over-simplified the category to “web development”, which attracted only uninformed and less valuable buyers.

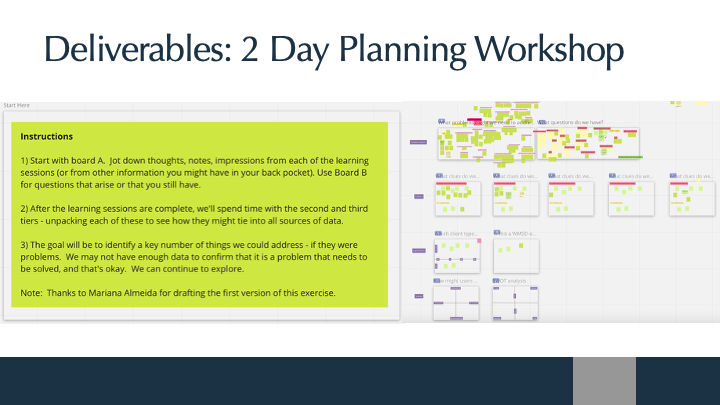

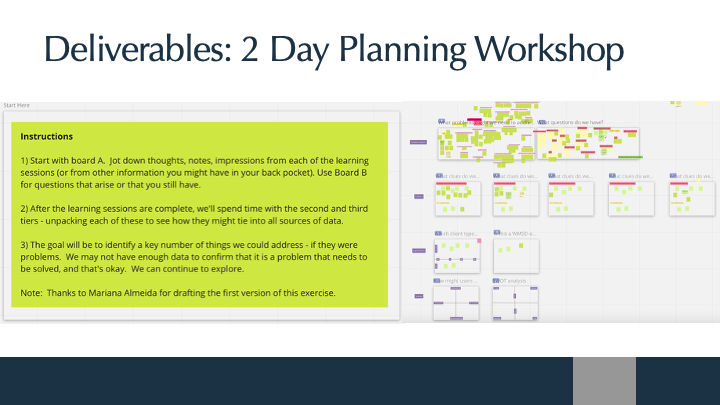

How did the team absorb all this information? Workshop the data!

For the core team responsible for setting strategy and the roadmap, we held a multi-day workshop to dive more deeply into findings from all tracks (vs. the summary readout). The Miro board stayed active for the entire workshop, and participants were encouraged to add notes, ideas and unanswered questions during and after each session.

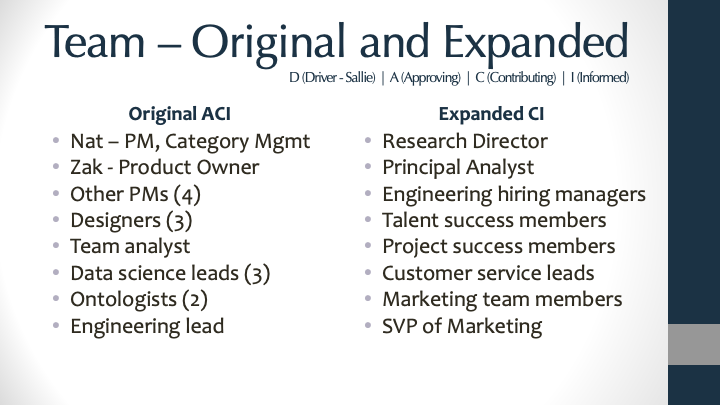

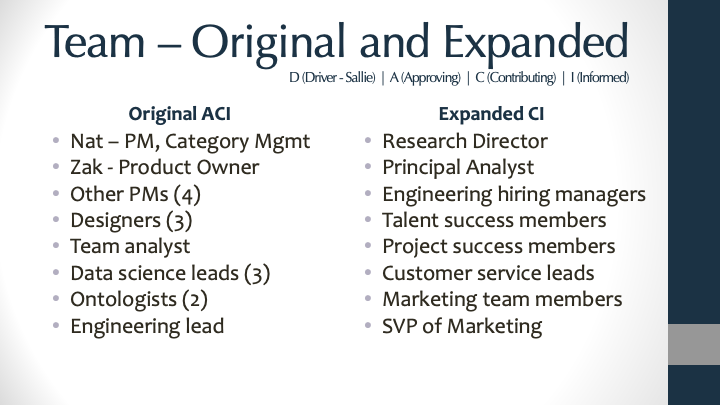

DACI team by role

This was one of the largest and most compressed research agendas I’ve led, and its success was due to all of the participants, who were engaged, curious, collaborative, responsive and excited about what we were learning. This study snowballed in interest and impact along the way, so a huge shout-out goes to the lead PM, Nat, who was not only an invaluable partner and sounding board but also helped wrangle and engage everyone outside the core team.